Roboreactor

An end-to-end platform powered by agentic AI to turn ideas into real, operating robots — from CAD generation to real-time deployment in the physical world.

Web-Based Real-time

How it Works: The Robotic Lifecycle

AI-Driven Blueprinting

Describe the robot you want to build in natural language. Our Agentic Manufacturing Assistant AI interprets your mission, operating environment, and behavior goals, then selects the optimal hardware stack from your description. Based on that selected hardware, the system automatically generates matched firmware and middleware for your robot architecture.

Topological Refining

Visualize and fine-tune your robotic logic within the node-based code generator. Dynamically adjust hardware nodes and control loops to match your design before triggering final synthesis.

Export & Code Generation

Once your hardware node graph is reviewed in Roboreactor, hit Export to trigger full code synthesis. The system reads every node connection — motor drivers, sensors, cameras, communication buses, MCU pin mappings — and generates production-ready code, including robot firmware and middleware matched to the hardware selected by the Agentic Manufacturing Assistant AI. No manual wiring, no protocol configuration, no boilerplate to write.
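As a rough illustration of the export step, the sketch below turns a list of node connections into setup code using per-interface templates. Everything in it is hypothetical: the `Connection` record, the `TEMPLATES` table, and the emitted class names (`PwmOutput`, `I2cDevice`, `UartPort`) are assumptions made for this example and do not describe Roboreactor's actual generator or output.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Connection:
    """One edge of a hypothetical hardware node graph."""
    component: str  # e.g. "left_motor"
    interface: str  # e.g. "PWM", "I2C", "UART"
    pin: str        # MCU pin label, e.g. "GPIO18"


# Hypothetical code templates, one per interface type.
TEMPLATES = {
    "PWM": '{name} = PwmOutput(pin="{pin}")',
    "I2C": '{name} = I2cDevice(sda_pin="{pin}")',
    "UART": '{name} = UartPort(tx_pin="{pin}")',
}


def generate_setup_code(connections):
    """Emit one setup line per connection, sorted by component name."""
    lines = ["# Auto-generated hardware setup (illustrative)"]
    for conn in sorted(connections, key=lambda c: c.component):
        template = TEMPLATES.get(conn.interface)
        if template is None:
            raise ValueError(f"no template for interface {conn.interface!r}")
        lines.append(template.format(name=conn.component, pin=conn.pin))
    return "\n".join(lines)


if __name__ == "__main__":
    graph = [
        Connection("left_motor", "PWM", "GPIO18"),
        Connection("imu", "I2C", "GPIO2"),
    ]
    print(generate_setup_code(graph))
```

Sorting by component name keeps the output stable for the same graph, which is one simple way to make generation repeatable.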

Deployment & Interaction

Deploy production-grade code to your hardware with one click. Maintain full lifecycle control through real-time 3D telemetry, interacting with your robot as designed via our global visualizer.

Core Intelligence Technologies

Autonomous VLA

Vision-Language-Action agents that interpret scenes and act in real time using fused sensor data.

Hardware Synthesis

Deterministic code generation for STM32, ESP32, Jetson, and Raspberry Pi, or for generated computer architectures.

Distributed Backbone

High-performance command-queue architecture for multi-robot swarms.

Real-time Visualizer

3D Point-Cloud and Digital Twin synchronization for global remote control.
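The command-queue idea behind the Distributed Backbone can be pictured with a small in-memory sketch: each robot gets its own first-in, first-out queue, and a command can be sent to one robot or broadcast to a group. The `CommandQueues` class and its method names are assumptions for illustration only; a real multi-robot deployment would sit on a networked message broker rather than a single process.

```python
from collections import defaultdict, deque


class CommandQueues:
    """Hypothetical per-robot FIFO command queues held in memory."""

    def __init__(self):
        self._queues = defaultdict(deque)

    def enqueue(self, robot_id, command):
        """Append a command to one robot's queue."""
        self._queues[robot_id].append(command)

    def broadcast(self, robot_ids, command):
        """Append the same command to every listed robot's queue."""
        for robot_id in robot_ids:
            self.enqueue(robot_id, command)

    def next_command(self, robot_id):
        """Pop the oldest pending command, or return None if there is none."""
        queue = self._queues.get(robot_id)
        return queue.popleft() if queue else None


if __name__ == "__main__":
    queues = CommandQueues()
    queues.broadcast(["r1", "r2"], "stop")
    queues.enqueue("r1", "forward")
    print(queues.next_command("r1"))
    print(queues.next_command("r1"))
```

Keeping one queue per robot preserves command order for each robot while letting the swarm members consume commands at their own pace.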

Generate code

Describe your robot in plain language and let the Collective Task AI automatically build the full hardware node graph. The system intelligently selects and classifies every component across Motion, Navigation, Sensors, Vision, Audio, Communication, and MCU systems — then maps each hardware interface (PWM, I2C, UART, SPI, CAN, ADC, DAC, GPIO) to the correct microcontroller pins. The resulting drawflow node graph is displayed live in Roboreactor, showing every hardware connection and communication protocol. From there, production-ready code is generated for your single board computer instantly — no manual wiring diagrams, no low-level protocol knowledge required.
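To make the pin-mapping step concrete, here is a minimal sketch that assigns each requested interface to a free pin that supports it. The pin names and the `PIN_CAPABILITIES` table are invented for the example and do not correspond to any real board, and the greedy strategy is an assumption, not a description of how Roboreactor allocates pins.

```python
# Hypothetical capability table: pin label -> interfaces it supports.
PIN_CAPABILITIES = {
    "P0": {"GPIO", "PWM"},
    "P1": {"GPIO", "ADC"},
    "P2": {"GPIO", "I2C"},
    "P3": {"GPIO", "UART"},
    "P4": {"GPIO"},
}


def assign_pins(requests, capabilities=PIN_CAPABILITIES):
    """Greedily map (component, interface) requests to distinct pins.

    Requests whose interface is supported by the fewest pins are served
    first, and each request takes the least capable free pin that fits,
    so plain GPIO requests do not consume specialised pins.
    """
    free = dict(capabilities)

    def supporting_pin_count(request):
        interface = request[1]
        return sum(1 for caps in capabilities.values() if interface in caps)

    assignment = {}
    for component, interface in sorted(requests, key=supporting_pin_count):
        options = sorted(
            (pin for pin, caps in free.items() if interface in caps),
            key=lambda pin: (len(free[pin]), pin),
        )
        if not options:
            raise ValueError(f"no free pin supports {interface} for {component}")
        pin = options[0]
        assignment[component] = pin
        del free[pin]
    return assignment


if __name__ == "__main__":
    wanted = [("status_led", "GPIO"), ("servo", "PWM"), ("battery_sense", "ADC")]
    print(assign_pins(wanted))
```

A greedy pass like this is simple but not guaranteed to find an assignment whenever one exists; a bipartite matching algorithm would be the more robust choice for boards with many overlapping pin functions.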

Create your project with the Manufacturing Assistant AI

Utilize the Manufacturing Assistant AI to design robots and automation systems effortlessly. Simply talk to our AI, and you can create an entire project with ease, including the required hardware, code, and components. Our system generates URDF models from Onshape software, which can then be seamlessly integrated with navigation and motion control systems.

Motion control and visualization

With our motion control, you can connect to your robot remotely through our rapid IoT system and operate it in real time from anywhere. You can also run the robot in development mode without interruption.

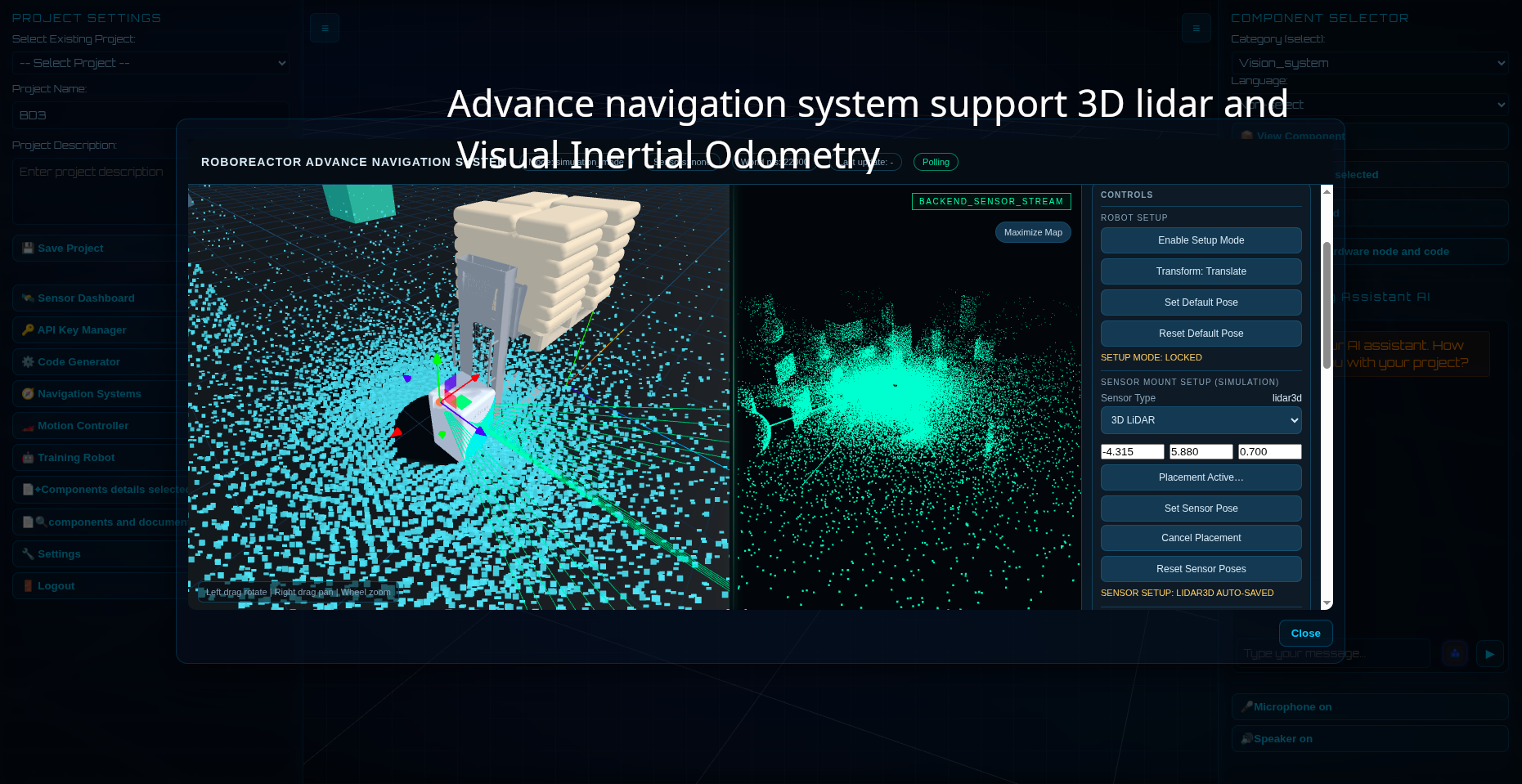

Navigation system and visualization

Real-time point cloud mapping, created by your robot's sensors including Lidar, Radar, Sonar, WiFi localization, Beacons, and cameras, is available for indoor and outdoor navigation systems through our navigation visualizer. Track your robot's movements and coordinate multiple robots to create a swarm.
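For readers unfamiliar with how range sensors feed a point-cloud map, the sketch below converts one planar lidar scan into 2D points in the map frame, given the robot's pose. The function name `scan_to_points` is hypothetical; the parameter names `ranges`, `angle_min`, and `angle_increment` follow the common ROS `LaserScan` convention, which is an assumption here and not something the platform is stated to use. A full 3D point cloud would add a height coordinate and a 3D pose.

```python
import math


def scan_to_points(ranges, angle_min, angle_increment,
                   pose=(0.0, 0.0, 0.0), max_range=float("inf")):
    """Convert a planar range scan to (x, y) points in the map frame.

    ranges: distances in metres, one per beam.
    angle_min: angle of the first beam in radians, in the robot frame.
    angle_increment: angular step between beams in radians.
    pose: robot (x, y, heading) in the map frame.
    """
    x0, y0, heading = pose
    points = []
    for i, r in enumerate(ranges):
        # Skip invalid returns (inf, NaN, non-positive, or beyond max_range).
        if not math.isfinite(r) or r <= 0.0 or r > max_range:
            continue
        angle = heading + angle_min + i * angle_increment
        points.append((x0 + r * math.cos(angle), y0 + r * math.sin(angle)))
    return points


if __name__ == "__main__":
    scan = [1.0, float("inf"), 2.0]
    for x, y in scan_to_points(scan, 0.0, math.pi / 2, pose=(1.0, 0.0, 0.0)):
        print(round(x, 3), round(y, 3))
```

Accumulating the points from successive scans, each transformed with the pose at its capture time, is the basic way a map builds up as the robot moves.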

Key features

| Feature | Basic | Professional | Enterprise |
|---|---|---|---|
| Supported programming languages | Python | Python | Python, Rust |
| Components database | basic | professional | enterprise |
| Raspberry Pi, Jetson, and industrial SBC support | industrial use | industrial use | industrial use |
| LLM models | Llama3.2, nemotron-3-super, kimi-k2.5 | Llama3.2, gemini-2.5, kimi-k2.5 | Llama3.3, gemini, kimi-k2.5 |
| Advanced digital twin navigation | | | |
| 3D digital twin motion control visualizer | | | |
| Automatic node and code generation | | | |
| Automatic CAD and assembly | | | |

Start creating your robotics product and automation system now!